Chapter 6 Explaining two-picture change blindness

For this unit, the term change blindness refers to the failure to notice changes in animations that alternate between two pictures of a scene. Later we will also talk about other situations in which people miss changes, but this chapter focuses on the two-picture alternation animations. In previous years, you probably already saw some of those amazing demonstrations. Here, however, we will learn somewhat different lessons from the ones you learned before.

First you need to realize that when we view a scene, we typically remember very few details about it. That’s true even when we actively try to memorize the contents of the scene. When watching this movie, please scrutinise the scene carefully.

Wasn’t that amazing? In some ways, we humans are a lot dumber than we think! People usually don’t notice any of the several changes made to the scene. We’ll circle back to this Whodunnit movie, but first let’s talk about a case that may be even more striking.

6.1 Blindness for gradual changes

In the Whodunnit movie, the changes occurred off-screen, when the camera was focused tightly on the detective on the left. One might expect that if the changes happened right in front of your eyes, you would notice them.

Amazingly, we fail to notice changes right in front of us, too, if they happen very gradually, as illustrated in this movie.

6.1.1 The “grand illusion of visual experience”

Most people are surprised by the blindness for changes in the gradual-change movie. Many researchers were very surprised, too, and some concluded that there is a grand illusion of visual experience.

This is the claim that although people think they are simultaneously experiencing the whole visual field, they are wrong: that impression is an illusion. These researchers explain change blindness by claiming that at any one time, you experience only a small portion of the visual field, namely the parts you are particularly attending to. In other words, these researchers claim that visual experience is subject to a strong bottleneck.

However, this conclusion that there is a bottleneck on visual experience may be premature. To understand why, we need to consider in more detail what the failure to notice changes might mean. We need to consider the processing that’s needed to detect a change.

6.1.2 What is needed to detect a change?

Let’s consider what might be involved in detecting the change of an object or part of a scene:

- Internal representations of the object before and after the change.

- A process that compares the before representation to the after.

- A process that calls attention to, or brings into conscious awareness, the instances of change.

Apparently, at least one of the above three ingredients is lacking. Let’s consider #1 first. All incoming retinal signals across the scene do get processed. Unfortunately, however, if the object is in the periphery, the retinal signals may not have high enough resolution for its representation to differ before and after the change. This is because vision is low resolution in the periphery (4).

For many real-world scenes, then, a limitation of #1 above is sufficient to explain why people don’t notice changes: the internal representation of the object is too low in fidelity. However, this is not enough to explain all failures to notice changes. Even when researchers create displays in which all the objects are big enough and widely spaced enough to see in the periphery, people still miss many changes. For example, in some classic demonstrations, like this boat scene, the changes are large enough to be seen in the periphery.

So #2 or #3, or both, must be lacking. This is likely due to a bottleneck: these processes are limited in capacity, so they cannot process all objects in the visual scene simultaneously. And what about the “grand illusion of visual experience”? Well, it seems quite possible that we may have experience of objects without having processes that correspond to #2 and #3. In other words, the conclusion that there is a grand illusion of visual experience may be a hasty one (Noë, Pessoa, and Thompson (2000)). When people are surprised by change blindness, their mistake may be failing to realise that several processes are required to notice a change, and that visual experience may not always involve them.

Only a finite number of neurons can fit in our heads, and evolution seems not to have prioritized processes #2 and #3. The brain has not devoted neurons to constantly comparing what you’re seeing now with what you saw half a second ago. Comparing what was present at two different times requires the limited resources of attention to be at that location at both times. We don’t know why evolution did not prioritize these processes, but one possibility is that a full comparison process (#2) would require a lot of neurons, and animals like us have been able to get by with other, simpler processes, which we will discuss next.

6.2 Bottom-up attention and flicker/motion detectors

While limited capacity means we can’t fully process the whole visual scene simultaneously for changes, brains have evolved some simple tricks that help us catch many changes. One of these is that our brains have flicker or motion detectors that do simultaneously process every part of the scene.

At my home, mounted high in the corner of the carport, is an inexpensive motion detector. This device is wired such that if it detects motion, the carport light comes on. There is nothing fancy about the processing within it - not much circuitry is required for it to work.

When done by neurons, too, crude motion detection doesn’t require much work or energy (you can learn more about this in PSYC3013). In one or more of the retinotopic visual maps in our brains, each bit of the map has flicker/motion detectors that ordinarily fire as soon as something happens at the corresponding location in the scene. Specifically, the sudden appearance or sudden disappearance of an object will make these flicker/motion detectors fire.

Firing of those flicker/motion detectors can call attention to a location. This is an instance of bottom-up attention (5). Thus, the brain uses bottom-up attention as a work-around: the flicker or motion ordinarily caused by a change summons attention to a location, and then more limited-capacity processes work out what’s changing there.

In summary, we have evolved to process only a few things simultaneously across the whole scene. Two of these things are flicker and motion. Thus, detecting motion and flicker is NOT capacity-limited, and we rely on this to signal the locations where something is happening.

Very gradual changes do not trigger our flicker/motion detectors. But when changes are sudden, this will stimulate our motion or flicker detectors, which in many circumstances will call attention to the associated location.
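To make the contrast between sudden and gradual changes concrete, here is a toy sketch in Python. It is an illustrative assumption, not a model of real neurons: a single location's "detector" compares the current frame with the previous one and fires only when the frame-to-frame difference exceeds a threshold. The name `transient_fires` and the `THRESHOLD` value are hypothetical.

```python
# Toy transient detector (illustrative assumption, not a model of real neurons):
# the detector at one location compares each frame with the previous frame
# and fires only if the difference exceeds a fixed threshold.

THRESHOLD = 0.2  # hypothetical firing threshold, in arbitrary luminance units


def transient_fires(frames, threshold=THRESHOLD):
    """Return the frame indices at which the detector at one location fires."""
    return [t for t in range(1, len(frames))
            if abs(frames[t] - frames[t - 1]) > threshold]


# Sudden change: luminance jumps from 0.0 to 1.0 between two frames.
sudden = [0.0] * 5 + [1.0] * 5

# Gradual change: the same total change (0.0 -> 1.0) spread over 20 small steps.
gradual = [i / 20 for i in range(21)]

print(transient_fires(sudden))   # [5]
print(transient_fires(gradual))  # []
```

Both sequences end up at the same luminance, but only the sudden one produces a frame-to-frame jump big enough to trigger the detector, so only it would summon bottom-up attention in this toy model.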

These facts about the brains of humans and other animals are one reason that animals stay very still when they are worried about predators. Thanks to their camouflage, many animals can be hard to notice when they’re not moving, but as soon as they move, they’re quite conspicuous (watch this) and predators’ attention goes straight to them.

However, what happens if motion or flicker occurs in multiple places? It won’t be clear which of the associated locations attention should go to. O’Regan, Rensink, and Clark (1999) have demonstrated how this can enable change blindness with a display feature that they called “mud splashes” - see Traffic with splashes and Traffic without splashes. The idea is that these movies might resemble the situation in which splashes from a puddle hit your windshield while you are driving: the splash would trigger your motion detectors and thereby call your attention, preventing your attention from going to the location of other, potentially important changes.
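One way to see why splashes hurt is a deliberately simplified sketch. It assumes, purely for illustration, that bottom-up attention goes to one of the locations whose flicker detectors fired, each with equal probability. The function name `p_attend_change` is hypothetical, and real attentional capture is not this uniform.

```python
# Toy sketch (assumption): bottom-up attention picks one of the locations
# whose flicker/motion detectors fired, each with equal probability.

def p_attend_change(n_splashes):
    """Chance that the first shift of attention lands on the real change
    when n_splashes irrelevant transients occur at the same moment."""
    n_transient_locations = 1 + n_splashes  # the real change plus the splashes
    return 1 / n_transient_locations


for n in (0, 1, 5):
    print(n, round(p_attend_change(n), 3))
# 0 1.0
# 1 0.5
# 5 0.167
```

Without splashes, the change is the only transient, so attention goes straight to it; each added splash dilutes the chance that the first shift of attention finds the change.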

Broader background motion can also present a problem - if everything in the scene is moving, then our motion detectors are stimulated everywhere and attention may not go to the location of a change.

Now you have all the knowledge you need to understand the full explanation, in the next section, of why people take a long time to find the change in the classic change blindness animations.

6.3 Two-picture change blindness

Many of you have seen animations like that of the boat scene or this Paris scene, which sandwich a blank screen in between the two versions of the picture. It’s like a blank screen sandwich! The two pictures of the scene are analogous to the chocolate biscuits and the ice cream is analogous to the blank screen… yum.

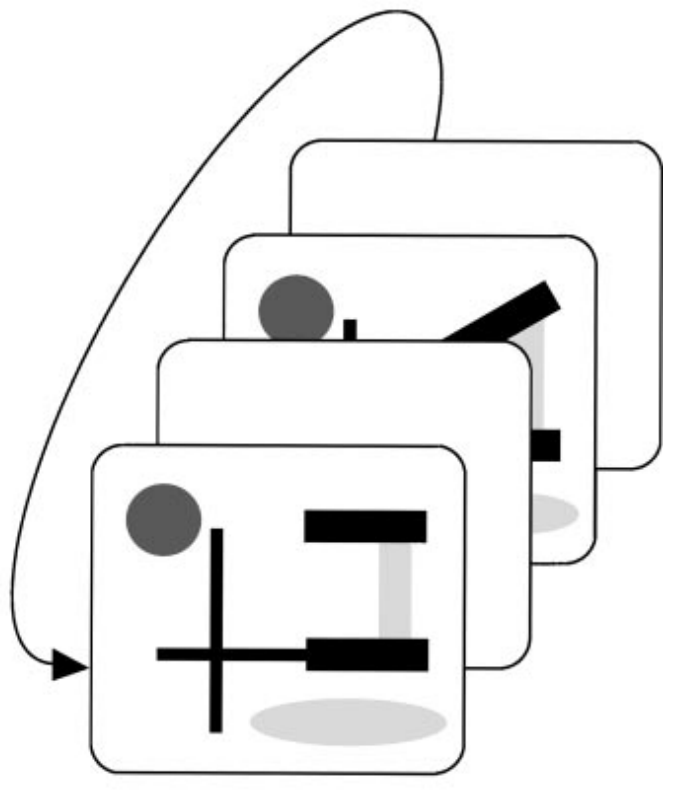

Here is a schematic of the timeline of a blank screen sandwich.

The blank screen is critical: it creates flicker everywhere in between the two frames. That is, when the picture of the scene is replaced by the blank screen, it creates a flicker signal everywhere, and then flicker everywhere again when the second scene comes on.

When the blank screen is removed from a blank screen sandwich, the scene change is conspicuous; this animation is an example. In it, the only location that tickles your transient detectors is that of the change. As a result, your attention goes straight to the location of the change.

Without the blank screen, the only location of flicker is the location of the changing object, and the flicker calls your attention to that location. With the blank screen, there’s flicker everywhere, so there is no indication of which of the many locations contains the change.
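The difference between the two kinds of animation can be sketched in a few lines of Python. Under the simplifying assumption that a scene is just a list of luminance values (one per location) and that a location "flickers" whenever its value changes from one frame to the next, the sketch lists where flicker occurs with and without the blank screen. The scene values and the function name `flicker_locations` are hypothetical.

```python
# Toy sketch (assumption): a scene is a list of luminance values, one per
# location, and a location "flickers" when its value changes between frames.

def flicker_locations(prev, curr):
    """Indices of locations whose value differs between two frames."""
    return [i for i, (a, b) in enumerate(zip(prev, curr)) if a != b]


scene_a = [3, 7, 2, 9, 5, 4]
scene_b = [3, 7, 2, 1, 5, 4]   # only location 3 changes
blank = [0] * len(scene_a)     # hypothetical uniform blank screen

# Direct alternation A -> B: flicker only where the change is.
print(flicker_locations(scene_a, scene_b))   # [3]

# Blank-screen sandwich A -> blank -> B: flicker everywhere, twice.
print(flicker_locations(scene_a, blank))     # [0, 1, 2, 3, 4, 5]
print(flicker_locations(blank, scene_b))     # [0, 1, 2, 3, 4, 5]
```

In the direct alternation, the flicker map points straight at the change; with the blank screen, every location flickers at both transitions, so the flicker map carries no information about where the change is.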

Then how do people ever find the change? We will return to this topic at the end of Chapter 9.

6.4 When change is everywhere but you still can’t see it

A blank-screen sandwich isn’t the only way to prevent motion or flicker signals from signalling to the brain that an object has changed.

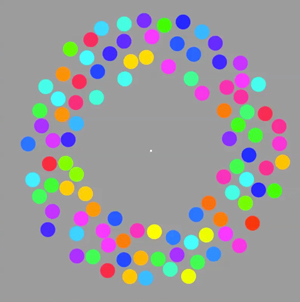

Adding motion to an object can make its other changes inconspicuous. In a phenomenon that Suchow and Alvarez (2011) dubbed “motion silencing”, under certain circumstances, even when every dot in a display is changing color, those changes aren’t noticeable if the color-changing dots are moving.

View the motion silencing animation here.

Jun Saiki & I showed that a key requirement for motion silencing to work is that the amount of each color stays approximately the same before and after the changes (Saiki and Holcombe 2012). As an extreme example, if 80% of the dots changed to green, participants noticed that change. This shows that people continuously monitor, to some extent, the distribution of colors present, and a large change in this distribution is noticed. This is sometimes referred to as a representation of the scene statistics.
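The idea of monitoring scene statistics can be illustrated with a toy sketch. It assumes, for illustration only, that the monitored statistic is simply the proportion of dots of each color, and that a change is noticed when some proportion shifts by more than a tolerance. The `TOLERANCE` value and function names are hypothetical, not values or methods from Saiki and Holcombe (2012), and the sketch ignores the dots' motion entirely.

```python
from collections import Counter

# Toy sketch (assumption): the monitored "scene statistics" are the proportions
# of dots of each color, and a change is noticed only if some proportion
# shifts by more than a hypothetical tolerance.

TOLERANCE = 0.1  # hypothetical; not a value from Saiki and Holcombe (2012)


def color_proportions(dots):
    counts = Counter(dots)
    return {color: n / len(dots) for color, n in counts.items()}


def statistics_changed(before, after, tol=TOLERANCE):
    p, q = color_proportions(before), color_proportions(after)
    return any(abs(p.get(c, 0) - q.get(c, 0)) > tol for c in set(p) | set(q))


before = ["red", "green", "blue", "yellow", "red",
          "green", "blue", "yellow", "red", "green"]

# Every dot changes color, but the color distribution is preserved
# (each dot takes the color of its neighbour).
after_same_stats = before[1:] + before[:1]

# 80% of the dots end up green: the color distribution shifts a lot.
after_mostly_green = ["green"] * 8 + ["red", "blue"]

print(sum(a != b for a, b in zip(before, after_same_stats)))  # 10
print(statistics_changed(before, after_same_stats))           # False
print(statistics_changed(before, after_mostly_green))         # True
```

In the first case all ten dots change color, yet the summary statistic is identical, so this toy monitor detects nothing; in the second case the proportion of green jumps, and the monitor flags the change.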

Another thing that can break change blindness is a change to the overall meaning of the scene. For example, if you were viewing a scene of a living room, and suddenly the furniture was re-arranged to resemble a lecture theatre, you’d be very likely to notice that. Like the distribution of colors, the “gist” or overall meaning of a scene seems to be continuously encoded and monitored for change.

To depict this less spatially selective, global gist processing, Wolfe et al. (2011) created the figure below.

The non-selective pathway is more difficult to study, and there’s still a lot about it that researchers don’t yet understand. However, we know that our understanding of the meaning or gist of a scene helps guide attention. For example, when looking for keys in our car, we look around the ignition and the seat, the places where keys are most likely to end up.

6.5 Exercises

Answer these questions and relate them to the first four learning outcomes, and the last one (2):

- Why can classic change blindness animations be described as a “blank screen sandwich”?

- Why are gradual changes hard to detect?

- What effect do splashes and other irrelevant sudden changes in a scene have on our ability to detect important changes? How do they have that effect?